/08 — 2026

Mission-Driven Habitability

A TU Delft studio response to mission-driven confinement at Antarctica's Troll Station: a self-supporting Voronoi interior fit-out inside the standard living container, 3D-printed from the station's plastic waste, with AI-driven circadian lighting for the polar night.

- role: Group work (7 members)

- location: TU Delft

- org: TU Delft, MSc Architecture, Urbanism and Building Sciences

- tutors: Henriette Bier (course), Arwin Hidding, Vera Laszlo, Lisa-Marie Mueller

- tools: Rhino · Grasshopper · Karamba3D · Python (scikit-learn) · Arduino / ESP32 · HTML/JS · 3D printing

Overview

Troll Station sits in the Norwegian sector of Antarctica; during the polar winter, occupants spend long stretches inside a standard container-based shell, cut off from daylight and from resupply. The studio brief asked how the interior — not the envelope — could carry habitability through this period. Our team framed a worst-case stress test: two researchers remain continuously inside a single container for seven days. The scenario isn't a literal prediction — it is a way to surface the spatial, environmental and psychological demands that a normal-operation brief would hide.

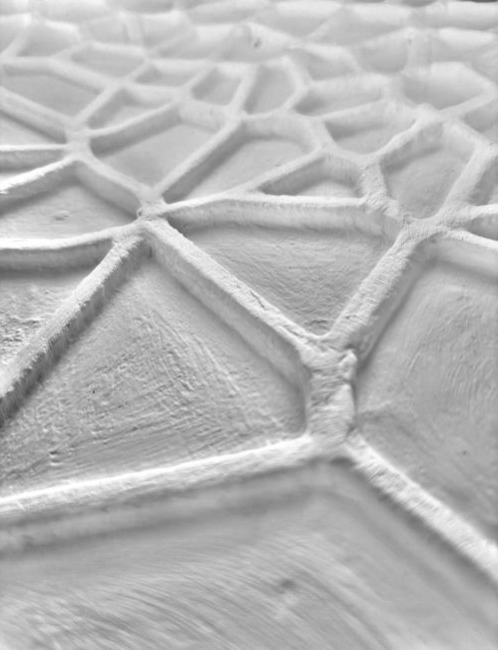

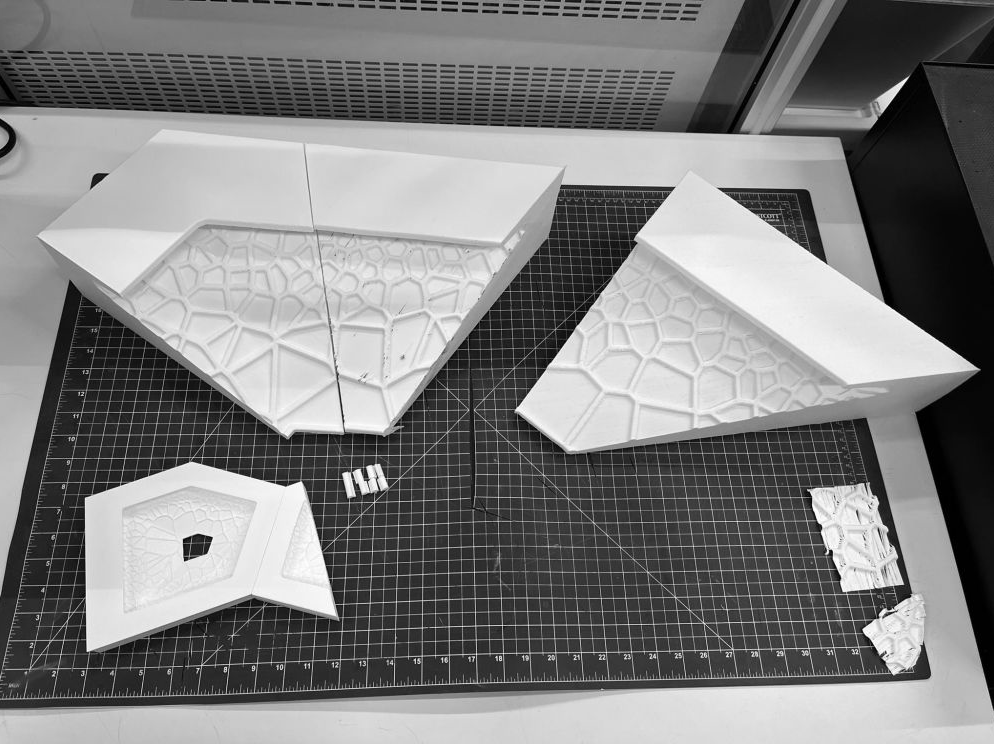

Three criteria structured the proposal: agency (users can modify the space in response to changing routines), privacy (retreat remains possible under constant co-presence), and sleep (rest is treated as a spatial and environmental condition, not just a timetable). A self-supporting Voronoi fit-out with integrated foldable furniture absorbs routine changes; panels are 3D-printed from the station's own plastic waste; an AI model adjusts illuminance and correlated colour temperature in response to circadian logic, weather data, and selected physiological signals.

Geometry was developed in Grasshopper: the orthogonal container order is preserved, while the Voronoi infill articulates zones at room scale and acoustic / structural texture at panel scale. The lighting model was trained in Python with scikit-learn on a 70 / 30 train / test split (~93% accuracy for illuminance, ~81% for CCT). Its predictions feed a Grasshopper visualisation and an ESP32 / Arduino prototype, driven either by a precomputed 24-hour sequence or live from an HTML PhysioApp interface that lets the environment either mirror a detected state or compensate for it.

Scenario — seven days indoors

The design targets the most severe winter interval: continuous darkness, restricted external mobility, and the longest gap between resupply missions. Within that frame, two occupants share a single container for seven days without leaving. The scenario is not a prediction — it's a stress test that exposes the spatial, environmental and psychological demands of confinement that a 'normal-operation' brief would hide.

From AI output to LED input

The trained model emits correlated colour temperature (kelvin) and illuminance (lux) — environmentally meaningful, but not directly consumable by an LED strip. A translation chain converts CCT → RGB and illuminance → brightness, bounds CCT to the 2700–6500 K window, and couples the two channels so colour temperature and intensity stay correlated. Across a 24-hour cycle the red channel stays structurally high; what changes is the balance of blue and green.
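
The translation chain described above can be sketched in Python as follows. This is a minimal illustration, not the project's actual code: the CCT-to-RGB step uses Tanner Helland's widely circulated curve-fit approximation as a stand-in (the source does not say which conversion was used), the linear lux-to-brightness mapping and the `LUX_MAX` full-scale value are assumptions, and the coupling between the two channels is not modelled here. Only the 2700–6500 K window comes from the text.

```python
import math

CCT_MIN_K, CCT_MAX_K = 2700, 6500   # window stated in the text
LUX_MAX = 500.0                     # hypothetical full-scale illuminance


def clamp(value, low, high):
    """Bound value to the closed interval [low, high]."""
    return max(low, min(high, value))


def cct_to_rgb(cct_kelvin):
    """Approximate an (R, G, B) triple for a colour temperature in kelvin.

    Uses the warm branch of Helland's approximation (valid up to 6600 K);
    the clamp keeps the input inside that branch.
    """
    t = clamp(cct_kelvin, CCT_MIN_K, CCT_MAX_K) / 100.0
    red = 255.0  # constant below 6600 K in this approximation
    green = 99.4708025861 * math.log(t) - 161.1195681661
    blue = 138.5177312231 * math.log(t - 10.0) - 305.0447927307
    return tuple(int(round(clamp(c, 0, 255))) for c in (red, green, blue))


def lux_to_brightness(lux, lux_max=LUX_MAX):
    """Linearly map illuminance onto a 0-255 brightness value (assumed mapping)."""
    return int(round(clamp(lux / lux_max, 0.0, 1.0) * 255))
```

Under this approximation the red channel is fixed at 255 across the whole bounded window, and only green and blue vary. That matches the observation that red stays structurally high over the 24-hour cycle.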

Setting A — scripted 24-hour cycle

A precomputed CSV of R, G, B, brightness rows is hard-coded into the Arduino sketch; the loop steps through one row every 3 s, compressing a full day into roughly 4 min 27 s.
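
The timing can be checked with a short Python sketch. The row count is not stated in the source; 89 rows is inferred here from 4 min 27 s = 267 s at 3 s per row, which would put each row at roughly 16 minutes of simulated time. The sample rows and helper names are made up for illustration and stand in for the real table in the Arduino sketch.

```python
import csv
import io

STEP_SECONDS = 3  # step interval stated in the text


def load_rows(csv_text):
    """Parse 'R,G,B,brightness' lines into tuples of ints."""
    reader = csv.reader(io.StringIO(csv_text))
    return [tuple(int(cell) for cell in row) for row in reader if row]


def playback_seconds(row_count, step_seconds=STEP_SECONDS):
    """Total playback time when one row is shown per step."""
    return row_count * step_seconds


def format_duration(total_seconds):
    """Render a whole number of seconds as 'M min S s'."""
    minutes, seconds = divmod(total_seconds, 60)
    return f"{minutes} min {seconds} s"


# Three made-up rows, only to show the assumed format
sample = "255,167,87,40\n255,221,190,180\n255,254,250,255\n"
rows = load_rows(sample)

# 89 rows is an inference from the stated duration, not a figure from the source
print(format_duration(playback_seconds(89)))
```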

Setting B — live control

The ESP32 exposes HTTP endpoints on a local Wi-Fi network; the HTML PhysioApp posts JSON (preset, activity, response mode), the sketch parses it, scales RGB by brightness, and updates the strip.
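
The parse-and-scale step can be illustrated in Python; the firmware itself runs on the ESP32, so this only mirrors the logic. The JSON key names (`preset`, `activity`, `mode`) and the 0–255 brightness range are assumptions, since the source names the fields but not their exact spelling or units.

```python
import json


def parse_request(body):
    """Extract the three fields the text names from a JSON request body.

    The key names are hypothetical; the real PhysioApp payload may differ.
    """
    data = json.loads(body)
    return data["preset"], data["activity"], data["mode"]


def scale_rgb(rgb, brightness):
    """Scale each channel by brightness / 255 (brightness assumed 0-255)."""
    factor = max(0, min(255, brightness)) / 255
    return tuple(int(round(channel * factor)) for channel in rgb)
```

For instance, `scale_rgb((255, 128, 0), 0)` yields `(0, 0, 0)`, while a brightness of 255 leaves the colour unchanged.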

Mirror or compensate

Presets (calm, focus, stress, overload) × activities (sleep, eat, leisure, work) feed a single luminous output. Mirror reflects the detected state; compensate counterbalances it — a corrective rather than mimetic response.
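
One way to picture the two response modes is the Python sketch below. Every number in it is hypothetical: the source does not publish the preset or activity values, so the arousal scores, the brightness ceilings, and the rule that a more aroused state maps to cooler light are all illustrative assumptions. Only the preset and activity names, the two modes, and the 2700–6500 K window come from the text.

```python
CCT_MIN_K, CCT_MAX_K = 2700, 6500  # window stated in the text

# Hypothetical arousal scores per preset (0 = calm, 1 = overloaded)
PRESET_AROUSAL = {"calm": 0.0, "focus": 0.4, "stress": 0.8, "overload": 1.0}

# Hypothetical brightness ceilings per activity (0-255)
ACTIVITY_BRIGHTNESS = {"sleep": 20, "eat": 160, "leisure": 120, "work": 255}


def target_light(preset, activity, mode):
    """Return a (CCT in kelvin, brightness) pair for one preset/activity/mode."""
    arousal = PRESET_AROUSAL[preset]
    if mode == "compensate":
        arousal = 1.0 - arousal  # counterbalance the detected state
    elif mode != "mirror":
        raise ValueError(f"unknown mode: {mode}")
    cct = CCT_MIN_K + arousal * (CCT_MAX_K - CCT_MIN_K)
    return round(cct), ACTIVITY_BRIGHTNESS[activity]
```

With these placeholder values, a stress preset in mirror mode lands near the cool end of the window, while compensate mode pulls the same input toward the warm end.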

Limitations & ethics

The seven-day scenario is a stress test, not an in-situ validation; the predictive model runs on a curated dataset rather than live Antarctic data; the prototype demonstrates translation at panel scale, not the full habitat over long-duration occupation. More importantly, a system that watches the body to adjust the environment is not ethically neutral — even when it reduces manual control, it normalises continuous physiological monitoring inside a domestic setting. Consent, transparency, data residency, and a clear boundary between support and regulation are open questions the design doesn't yet answer.

Demo videos

Team

- Giorgia Vercelloni, Group member

- Maciej Sachse, Group member

- Floruț Ruxandra, Group member

- Zuzanna Schleifer, Group member

- Brendan Exterkate, Group member

- Gabriel Marks, Group member

- Wong Long Ki, Group member